Parity is among a growing crop of startups promising organizations ways to develop, monitor, and fix their AI models. They offer a range of products and services, from bias-mitigation tools to explainability platforms. Initially, most of their clients came from heavily regulated industries like finance and health care. But increased research and media attention on issues of bias, privacy, and transparency have shifted the focus of the conversation. Some new clients are simply worried about being responsible, while others want to “future proof” themselves in anticipation of regulation.

“So many companies are really facing this for the first time,” Chowdhury says. “Almost all of them are actually asking for some help.”

From risk to impact

When working with new clients, Chowdhury avoids using the term “responsibility.” The word is too squishy and ill-defined; it leaves too much room for miscommunication. She instead begins with more familiar corporate lingo: the idea of risk. Many companies have risk and compliance arms, and established processes for risk mitigation.

AI risk mitigation is no different. A company should start by considering the different things it worries about. These can include legal risk, the possibility of breaking the law; organizational risk, the possibility of losing employees; or reputational risk, the possibility of suffering a PR disaster. From there, it can work backwards to decide how to audit its AI systems. A finance company, operating under the fair lending laws in the US, would want to check its lending models for bias to mitigate legal risk. A telehealth company, whose systems train on sensitive medical data, might perform privacy audits to mitigate reputational risk.
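To make the lending example concrete, here is a minimal sketch of one check such a bias audit might include: comparing approval rates between two groups of applicants. It is an illustration only, not Parity's method or a legal test. The function names, the group labels, and the sample data are all hypothetical, and the 0.8 threshold is borrowed from the "four-fifths" rule of thumb used in US employment guidelines, assumed here purely as a flag for closer review.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of approved applications for each group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. (Hypothetical data layout.)
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, protected, reference):
    """Approval rate of the protected group divided by the reference group's."""
    rates = approval_rates(decisions)
    return rates[protected] / rates[reference]


# Made-up sample: group "A" is approved 8 times out of 10, group "B" 5 out of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

ratio = disparate_impact_ratio(sample, protected="B", reference="A")

# Assumed rule-of-thumb threshold; a real audit would set this with legal counsel.
needs_review = ratio < 0.8
print(f"ratio={ratio:.3f}, needs_review={needs_review}")
```

A low ratio does not by itself establish unlawful bias. In this sketch it only marks a model for the closer review that the risk framing calls for.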

PARITY

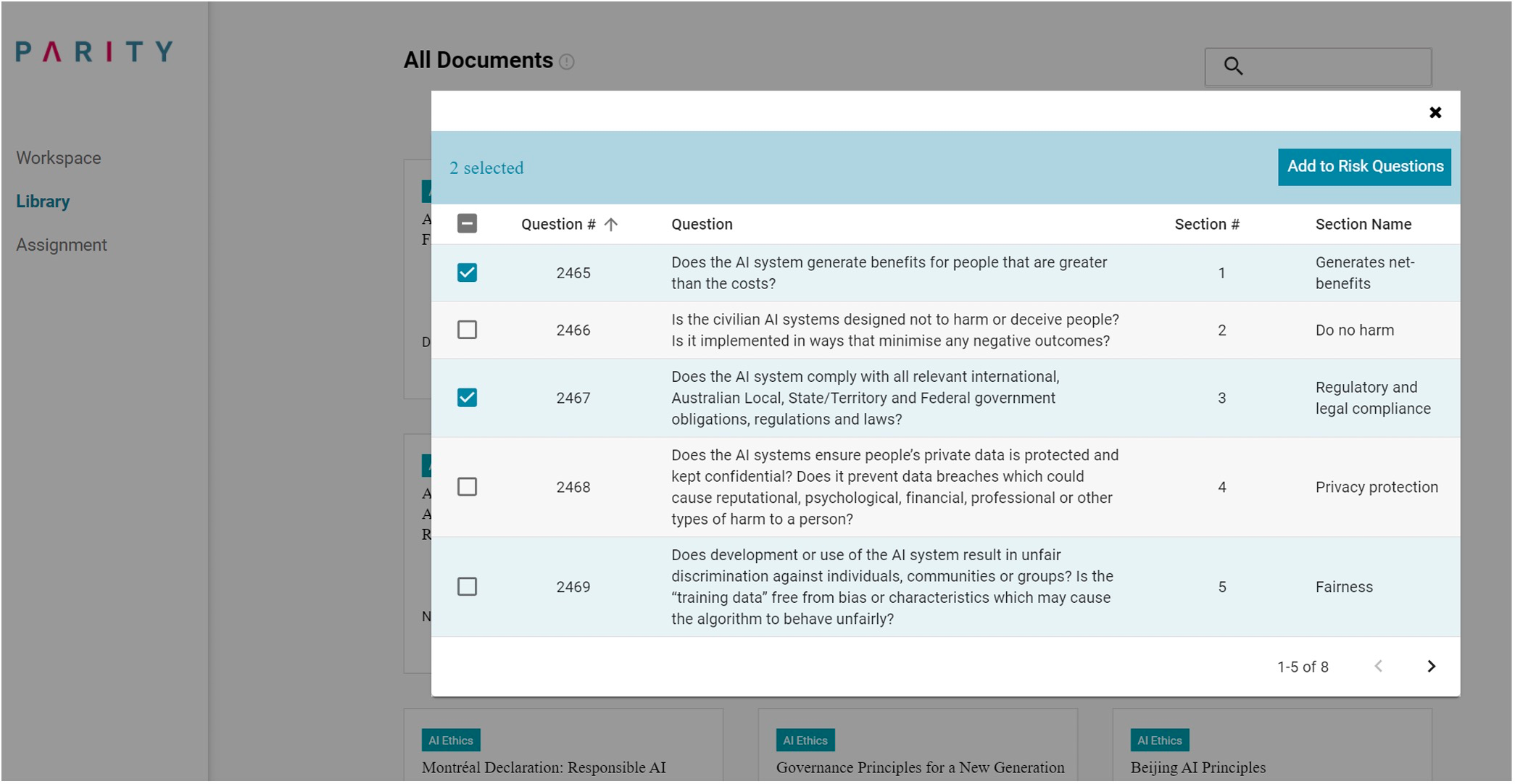

Parity helps to organize this process. The platform first asks a company to build an internal impact assessment: in essence, a set of open-ended survey questions about how its business and AI systems operate. The company can write custom questions or select them from Parity’s library, which has more than 1,000 prompts adapted from AI ethics guidelines and relevant legislation from around the world. Once the assessment is built, employees across the company are encouraged to fill it out based on their job function and knowledge. The platform then runs their free-text responses through a natural-language processing model and translates them with an eye toward the company’s key areas of risk. Parity, in other words, serves as the new go-between in getting data scientists and lawyers on the same page.

Next, the platform recommends a corresponding set of risk mitigation actions. These could include creating a dashboard to continuously monitor a model’s accuracy, or implementing new documentation procedures to track how a model was trained and fine-tuned at each stage of its development. It also offers a collection of open-source frameworks and tools that might help, like IBM’s AI Fairness 360 for bias monitoring or Google’s Model Cards for documentation.

Chowdhury hopes that if companies can reduce the time it takes to audit their models, they will become more disciplined about doing it regularly and often. Over time, she hopes, this could also open them to thinking beyond risk mitigation. “My sneaky goal is actually to get more companies thinking about impact and not just risk,” she says. “Risk is the language people understand today, and it’s a very valuable language, but risk is often reactive and responsive. Impact is more proactive, and that’s actually the better way to frame what it is that we should be doing.”

A responsibility ecosystem

While Parity focuses on risk management, another startup, Fiddler, focuses on explainability. CEO Krishna Gade began thinking about the need for more transparency in how AI models make decisions while serving as the engineering manager of Facebook’s News Feed team. After the 2016 presidential election, the company made a big internal push to better understand how its algorithms were ranking content. Gade’s team developed an internal tool that later became the basis of the “Why am I seeing this?” feature.