For all GPT-3’s flair, its output can feel untethered from reality, as if it doesn’t know what it’s talking about. That’s because it doesn’t. By grounding text in images, researchers at OpenAI and elsewhere are trying to give language models a better grasp of the everyday concepts that humans use to make sense of things.

DALL.E and CLIP come at this problem from different directions. At first glance, CLIP (Contrastive Language-Image Pre-training) is yet another image recognition system. Except that it has learned to recognize images not from labeled examples in curated data sets, as most existing models do, but from images and their captions taken from the internet. It learns what’s in an image from a description rather than a one-word label such as “cat” or “banana.”

CLIP is trained by getting it to predict which caption from a random selection of 32,768 is the correct one for a given image. To work this out, CLIP learns to link a wide variety of objects with their names and the words that describe them. This then lets it identify objects in images outside its training set. Most image recognition systems, by contrast, are trained to identify certain types of object, such as faces in surveillance videos or buildings in satellite images. Like GPT-3, CLIP can generalize across tasks without additional training. It is also less likely than other state-of-the-art image recognition models to be led astray by adversarial examples: images that have been subtly altered in ways that typically confuse algorithms even though humans might not notice a difference.
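To make the caption-matching idea concrete, here is a minimal sketch in plain Python. It is an illustration, not OpenAI's code: the three-number "embeddings," the function names, and the temperature value are all assumptions chosen for the example, and only the image-to-caption direction described above is shown. Each image is scored against every caption in the batch, and the loss is low when the image's own caption scores highest.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def caption_probabilities(image_vec, caption_vecs, temperature=0.07):
    """Softmax over captions: how likely each caption is to match the image.

    The temperature is an illustrative assumption; smaller values make
    the distribution sharper.
    """
    scores = [cosine_similarity(image_vec, c) / temperature for c in caption_vecs]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def caption_matching_loss(image_vecs, caption_vecs, temperature=0.07):
    """Mean cross-entropy, assuming caption i is the correct one for image i."""
    losses = []
    for i, image_vec in enumerate(image_vecs):
        probs = caption_probabilities(image_vec, caption_vecs, temperature)
        losses.append(-math.log(probs[i]))
    return sum(losses) / len(losses)


# Hypothetical toy embeddings: three images and their three captions.
images = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2], [0.1, 0.0, 1.0]]
captions_aligned = [[0.9, 0.0, 0.1], [0.1, 0.8, 0.0], [0.0, 0.2, 1.0]]
# The same captions paired with the wrong images.
captions_shuffled = [captions_aligned[1], captions_aligned[2], captions_aligned[0]]

aligned_loss = caption_matching_loss(images, captions_aligned)
shuffled_loss = caption_matching_loss(images, captions_shuffled)
print(aligned_loss < shuffled_loss)
```

In a real system the embeddings would come from neural networks that are adjusted to push this loss down across a batch of 32,768 image-caption pairs rather than three.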

Instead of recognizing images, DALL.E (which I’m guessing is a WALL.E/Dalí pun) draws them. This model is a smaller version of GPT-3 that has also been trained on text-image pairs taken from the internet. Given a short natural-language caption, such as “a painting of a capybara sitting in a field at sunrise” or “a cross-section view of a walnut,” DALL.E generates lots of images that match it: dozens of capybaras of all shapes and sizes in front of orange and yellow backgrounds; row after row of walnuts (though not all of them in cross-section).

Get surreal

The results are striking, though still a mixed bag. The caption “a stained glass window with an image of a blue strawberry” produces many correct results but also some that have blue windows and red strawberries. Others contain nothing that looks like a window or a strawberry. The results showcased by the OpenAI team in a blog post have not been cherry-picked by hand but ranked by CLIP, which has selected the 32 DALL.E images for each caption that it thinks best match the description.
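The ranking step can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not OpenAI's pipeline: the vectors stand in for embeddings that a model like CLIP would produce for the caption and for each generated image, and the candidate names and the `rerank` helper are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rerank(caption_vec, candidates, top_k):
    """Return the names of the top_k candidates most similar to the caption."""
    scored = [
        (cosine_similarity(caption_vec, vec), name)
        for name, vec in candidates.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # best match first
    return [name for _, name in scored[:top_k]]


# Hypothetical embedding for the caption
# "a stained glass window with an image of a blue strawberry".
caption = [1.0, 1.0, 0.0]

# Hypothetical embeddings for three generated images.
candidates = {
    "blue_strawberry_window": [0.9, 1.0, 0.1],
    "red_strawberry_blue_window": [1.0, 0.2, 0.3],
    "unrelated": [0.0, 0.1, 1.0],
}

best = rerank(caption, candidates, top_k=2)
print(best)
```

With these made-up numbers, the image that matches the whole caption ranks first and the unrelated image is dropped. The showcased results apply the same idea with a cutoff of 32 images per caption.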

“Text-to-image is a research challenge that has been around a while,” says Mark Riedl, who works on NLP and computational creativity at the Georgia Institute of Technology in Atlanta. “But this is an impressive set of examples.”

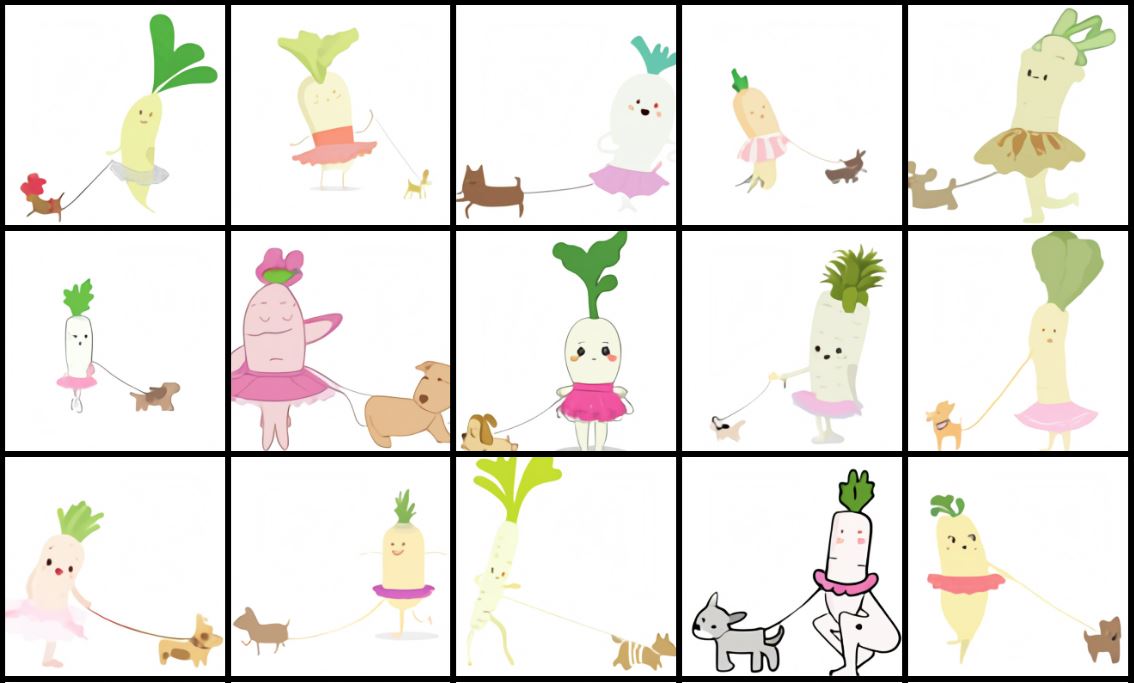

To test DALL.E’s ability to work with novel concepts, the researchers gave it captions that described objects they thought it would not have seen before, such as “an avocado armchair” and “an illustration of a baby daikon radish in a tutu walking a dog.” In both these cases, the AI generated images that combined these concepts in plausible ways.

The armchairs in particular all look like both chairs and avocados. “The thing that surprised me the most is that the model can take two unrelated concepts and put them together in a way that results in something kind of functional,” says Aditya Ramesh, who worked on DALL.E. The avocado armchairs probably work because a halved avocado looks a little like a high-backed armchair, with the pit as a cushion. For other captions, such as “a snail made of harp,” the results are less convincing, with images that combine snails and harps in odd ways.

DALL.E is the kind of system that Riedl imagined submitting to the Lovelace 2.0 test, a thought experiment that he came up with in 2014. The test is meant to replace the Turing test as a benchmark for measuring artificial intelligence. It assumes that one mark of intelligence is the ability to blend concepts in creative ways. Riedl suggests that asking a computer to draw a picture of a man holding a penguin is a better test of smarts than asking a chatbot to dupe a human in conversation, because it is more open-ended and less easy to cheat.

“The real test is seeing how far the AI can be pushed outside its comfort zone,” says Riedl.

“The ability of the model to generate synthetic images out of rather whimsical text seems very interesting to me,” says Ani Kembhavi at the Allen Institute for Artificial Intelligence (AI2), who has also developed a system that generates images from text. “The results seem to obey the desired semantics, which I think is pretty impressive.” Jaemin Cho, a colleague of Kembhavi’s, is also impressed: “Existing text-to-image generators have not shown this level of control drawing multiple objects or the spatial reasoning abilities of DALL.E,” he says.

Yet DALL.E already shows signs of strain. Including too many objects in a caption stretches its ability to keep track of what to draw. And rephrasing a caption with words that mean the same thing sometimes yields different results. There are also signs that DALL.E is mimicking images it has encountered online rather than generating novel ones.

“I am a little bit suspicious of the daikon example, which stylistically suggests it may have memorized some art from the internet,” says Riedl. He notes that a quick search brings up a lot of cartoon images of anthropomorphized daikons. “GPT-3, which DALL.E is based on, is notorious for memorizing,” he says.

Still, most AI researchers agree that grounding language in visual understanding is a good way to make AIs smarter.

“The future is going to consist of systems like this,” says Sutskever. “And both of these models are a step toward that system.”